
Security Teams Hit The Brakes As AI Agents Outrun Identity Controls

Enterprise Security

Vineet Love, VP and Deputy Head of Cybersecurity Practice at DigitalNet.ai, warns that shadow AI poses significant security risks, and organizations need to apply traditional human IAM and governance controls to agents.



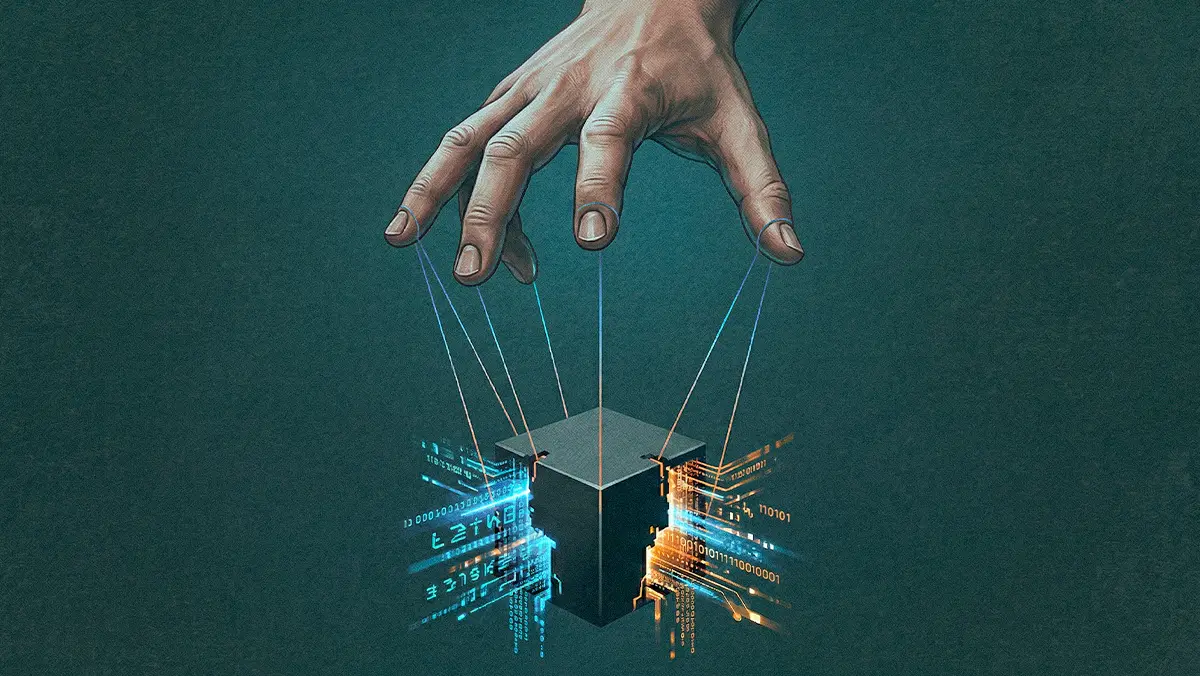

Giving a piece of software a task is easy. Figuring out how to give it an ID badge, track its movements, and revoke its access is the real problem, and security teams are quietly hitting the brakes as compliance and privacy issues arise. One of the central challenges for these teams is figuring out how to apply traditional Identity and Access Management principles to a fast-growing set of non-human actors.

Vineet Love sees the resulting friction firsthand. As VP and Deputy Head of Cybersecurity Practice for North America at DigitalNet.ai, he advises Fortune 500 boards and C-suites on cyber risk and enterprise security. He also leads the cybersecurity product vision for ATLAS, where his team focuses on scaling agentic AI capabilities and securing non-human actors across enterprise environments.

As barriers to entry fall, developers and security leaders can build bespoke scanning tools over a single weekend. Because of that velocity, many organizations are struggling to manage the resulting AI agent sprawl, a problem compounded by antiquated approaches to identity and access management. "Everyone is treating agents like human identities without a physical body," Love says. "That's exactly why identity is becoming one of the biggest bottlenecks in enterprise AI adoption." Because even an AI agent with the right permissions can wipe out a database, both vendors and customers are being pushed to tighten guardrails.

Capping the count: Love notes that some vendors are deliberately limiting their agent footprint to maintain customer confidence. “CrowdStrike released its Charlotte AI platform at RSA this year, and they only have 12 agents in the identity space. All of these software companies are now limiting agents. Having too many is exactly where the identity problem arises.”

Mad men and machines: One immediate administrative bottleneck is shadow AI. Non-technical departments sometimes bypass IT to solve everyday problems, often using AI tools and browsers without IT oversight. That can create a visibility gap, especially when human resources or finance teams begin integrating Copilot into existing enterprise workflows, such as CRMs. With no widely adopted tool that can instantly map every agent, Love worries that the spread of shadow AI is leaving security teams in the lurch. “Now there is shadow AI, where a marketing team is using Claude to develop products. These agents are getting spun up by marketing, HR, and finance while IT is completely blindsided.”

The real headache begins when new agentic technology collides with older infrastructure and traditional security. As organizations try to bolt new AI capabilities onto existing enterprise infrastructure, they quickly hit the limits of securing next-generation agents atop poorly configured, decade-old directory setups. The resulting friction frequently prompts a deeper review and cleanup of those baseline foundations.

Directory decay: Rather than reinventing access control from scratch, Love suggests that organizations replicate proven human IAM concepts. “The fundamentals will remain the same: least privilege, segregation of duties, and the maker-checker process. Nothing is changing on that.”

Replicating the routine: Once those fundamentals are established, the lifecycle itself mirrors what security teams have managed for years, just applied to a different kind of actor. “Machine identity will also go through steps from user commissioning to user decommissioning, and all the policies must be updated the way user policies get updated,” Love says.
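For readers who want to see what a commissioning-to-decommissioning lifecycle could look like in practice, here is a minimal sketch in Python. It is an illustration only, not something Love or any vendor describes: the state names, the `AgentIdentity` class, and the permission strings are all hypothetical.

```python
from dataclasses import dataclass, field
from enum import Enum


class State(Enum):
    COMMISSIONED = "commissioned"
    ACTIVE = "active"
    SUSPENDED = "suspended"
    DECOMMISSIONED = "decommissioned"


# Hypothetical transition table; decommissioning is terminal.
TRANSITIONS = {
    State.COMMISSIONED: {State.ACTIVE, State.DECOMMISSIONED},
    State.ACTIVE: {State.SUSPENDED, State.DECOMMISSIONED},
    State.SUSPENDED: {State.ACTIVE, State.DECOMMISSIONED},
    State.DECOMMISSIONED: set(),
}


@dataclass
class AgentIdentity:
    agent_id: str
    owner: str  # the accountable human who provisioned the agent
    state: State = State.COMMISSIONED
    permissions: set = field(default_factory=set)
    history: list = field(default_factory=list)

    def transition(self, new_state: State) -> None:
        """Move to new_state if the transition table allows it."""
        if new_state not in TRANSITIONS[self.state]:
            raise ValueError(
                f"{self.state.value} -> {new_state.value} is not allowed"
            )
        self.history.append((self.state, new_state))  # simple audit trail
        self.state = new_state
        if new_state is State.DECOMMISSIONED:
            self.permissions.clear()  # revoke all access on retirement
```

Recording an owner and a transition history on each identity is one way to answer the question of who provisioned an agent and when its access was withdrawn.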

The transition to machine identity lifecycles is already fracturing the market. Many legacy vendors are racing to add AI features while newer providers are building agentic layers that sit across existing infrastructure to surface context and prioritize risk without ripping and replacing core systems. In both cases, most new agents still need to be provisioned, monitored, and eventually decommissioned in a controlled way.

Love often advises teams to start the practical work of governance well before an agent touches production. When tracing the origins of rogue agents or compromised access, the first questions are usually "which employee provisioned the tool, and what guardrails were in place?"

Human at the helm: Because legacy risk assessments struggle to capture autonomous behavior, Love says that many CISOs are enforcing the maker-checker process at the human level, backed by strict testing and definitions of agent permissions. “When any product or vendor allows you to create agents, the right question is whether that agent is getting created within the guardrails of that particular product. Are we giving read or write access? What exactly is it supposed to do?”
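The maker-checker idea and the read-versus-write question can be sketched in a few lines of Python. This is a hedged illustration under stated assumptions, not a description of any product's controls: `PermissionRequest`, `is_allowed`, and the scope strings such as `crm:read` are hypothetical names.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class PermissionRequest:
    agent_id: str
    scope: str  # hypothetical scope string, e.g. "crm:read" or "crm:write"
    maker: str  # the person requesting access for the agent
    checker: Optional[str] = None  # a second person who approves it

    def approve(self, checker: str) -> None:
        """Record approval; the maker may not approve their own request."""
        if checker == self.maker:
            raise PermissionError("maker cannot approve their own request")
        self.checker = checker

    @property
    def approved(self) -> bool:
        return self.checker is not None


def is_allowed(grants: list, agent_id: str, scope: str) -> bool:
    """Deny by default: allow only an approved grant for this exact scope."""
    return any(
        g.approved and g.agent_id == agent_id and g.scope == scope
        for g in grants
    )
```

In this sketch, a request for `crm:read` that a second person has approved does not imply `crm:write`, which keeps the agent at least privilege until someone explicitly requests and reviews broader access.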

Authoritative guides like the NIST AI Risk Management Framework and the OWASP Top 10 for LLM Applications are becoming key reference points for teams seeking to structure these controls. Love notes that adopting them is only the starting point; the real work is operationalizing them through rigorous proof-of-concept demonstrations and training. Modernizing identity and access management for non-human actors is now showing up on board agendas, as directors weigh the reputational risks of poorly governed AI projects.

Demanding the receipts: The vendor side of the equation matters too. "One of the key questions when anyone is buying the product and technology is to ask the vendor for the application security scan report," he says. "We need to see how your agents and code are actually secured."

Without that discipline, the consequences extend well beyond the security team. Love argues that enterprise AI security is now a governance and identity story before it is a model story, and that weak onboarding, insufficient testing, and loose controls are what turn AI projects into headlines. "That is where initial GRC becomes very, very important in the AI space," he says. "If we do a loose job in the initial phase, and an agent goes wrong, the company becomes a media story."