Report says majority of employees embrace AI unsupervised, leaving companies vulnerable

Enterprise Security

A new EisnerAmper survey reveals 80% of employees use AI with little to no company oversight, posing security risks.

A new survey from EisnerAmper reveals a massive gap between widespread, unsupervised AI use by staff and the near-total lack of formal oversight from employers, creating a ticking time bomb for security and workplace dynamics.

Don't ask, don't tell: The report found that an astounding 80% of staff use AI with little to no company supervision. While more than a third of employees are frequent users, only 36% say their company has a formal AI policy, and a mere 22% report their usage is actively monitored. That isn't stopping them: more than a quarter admit they would use the tech even if it were banned.

Bring your own breach: The lack of oversight has led to a surge in unsanctioned AI use, with a majority of workers (60%) relying on free, public tools for their jobs. The practice creates a serious security risk, as employees have been known to paste sensitive company data into external platforms.

Despite the risks, employees are bullish on AI, with more than three-quarters saying it makes them more productive. While most claim they use the time saved to get more work done, others admit to taking a walk or a longer lunch. Now, having developed these new skills on the job, nearly three-quarters of employees want to be paid for them.

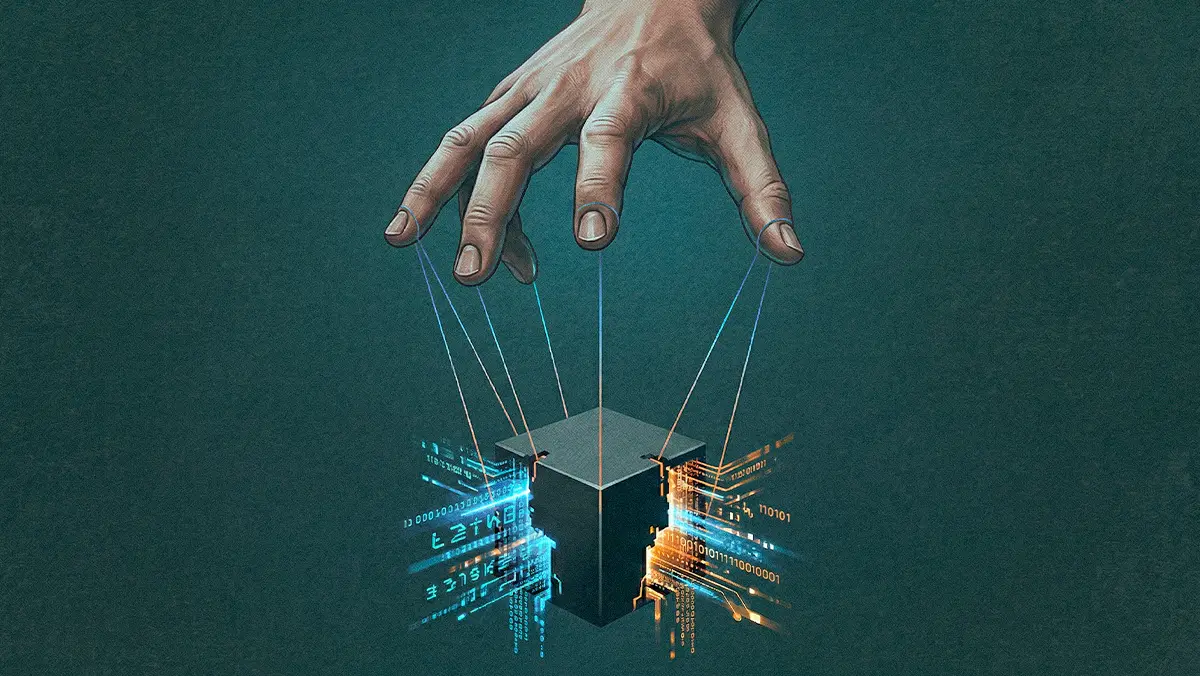

Companies are no longer in control of AI adoption. The workforce is integrating it from the ground up, forcing employers into a reactive position where they must now grapple with security risks, policy vacuums, and new compensation demands.

Also on our radar: The EisnerAmper report revealed a sharp generational divide in AI sentiment, with younger workers being twice as happy using the tech as their older colleagues. Elsewhere, employees seem to welcome AI in the onboarding process but are deeply divided on its use in performance reviews.