New Report Says Workers and Execs Alike Are Breaking Their Own Rules on AI Usage

Enterprise Security

According to a recent report, over half of U.S. office workers are willing to violate company AI policies to ease their workload, posing security risks.

More than half of U.S. office workers are willing to violate company AI policy to make their jobs easier, creating significant internal security risks, according to a new report from AI security firm CalypsoAI. The findings reveal a workforce that is now routinely circumventing the very guardrails put in place to manage the technology.

- Trust in the machine: Workplace trust is fundamentally shifting away from people and toward AI, with nearly half of employees now trusting algorithms more than their own coworkers. The preference is so strong that over a third would rather report to an AI manager, and a third say they would quit if their employer banned AI altogether.

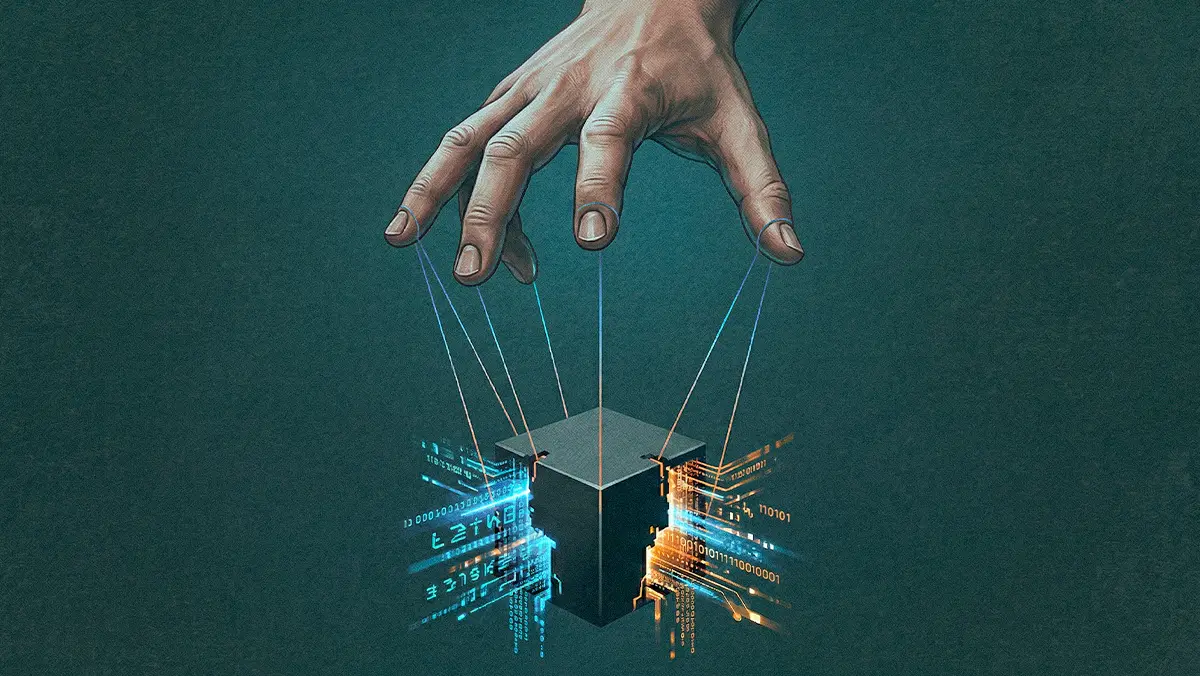

- Do as I say, not as I do: The disregard for protocol extends all the way to the top. More than two-thirds of C-suite executives would bypass internal policies to use AI, yet many are operating in the dark: nearly 40% of business leaders admitted they don’t know what an AI agent is.

- Fox guarding the henhouse: Perhaps most alarmingly, a similar dynamic is playing out among the people in charge of enforcement. Nearly 60% of security professionals say they trust AI more than their colleagues, a sentiment that helps explain why more than 40% of them knowingly use AI against company policy.

"We know inappropriate use of AI can be catastrophic for enterprises, and this isn't a future threat; it's already happening inside organizations today," said CalypsoAI CEO Donnchadh Casey in a release. The problem of "shadow AI" is particularly acute in highly regulated industries like finance and healthcare, echoing the early days of cloud adoption, when unsanctioned tech use was rampant. But it's not all defiance: many workers are simply looking for guidance, with a majority indicating they would welcome more education on AI risks.