
NSA, FBI, and global allies unveil joint AI data security playbook to combat evolving threats

Enterprise Security

U.S. security agencies and international partners released a Cybersecurity Information Sheet to secure AI data.

Top U.S. security agencies, including CISA, the NSA, and the FBI, alongside international partners, have released a new Cybersecurity Information Sheet aimed at safeguarding the data used to train and operate AI systems, stressing that reliable AI hinges on secure data. The move comes as organizations rapidly adopt AI, sometimes with "little forethought or oversight".

Data's dark side: The core concern, beyond just fending off hackers, is the fundamental reliability of AI itself. The joint recommendations pinpoint three major vulnerabilities: compromised data supply chains, the insidious threat of maliciously "poisoned" data, and "data drift," where AI models degrade as real-world information outpaces their training.

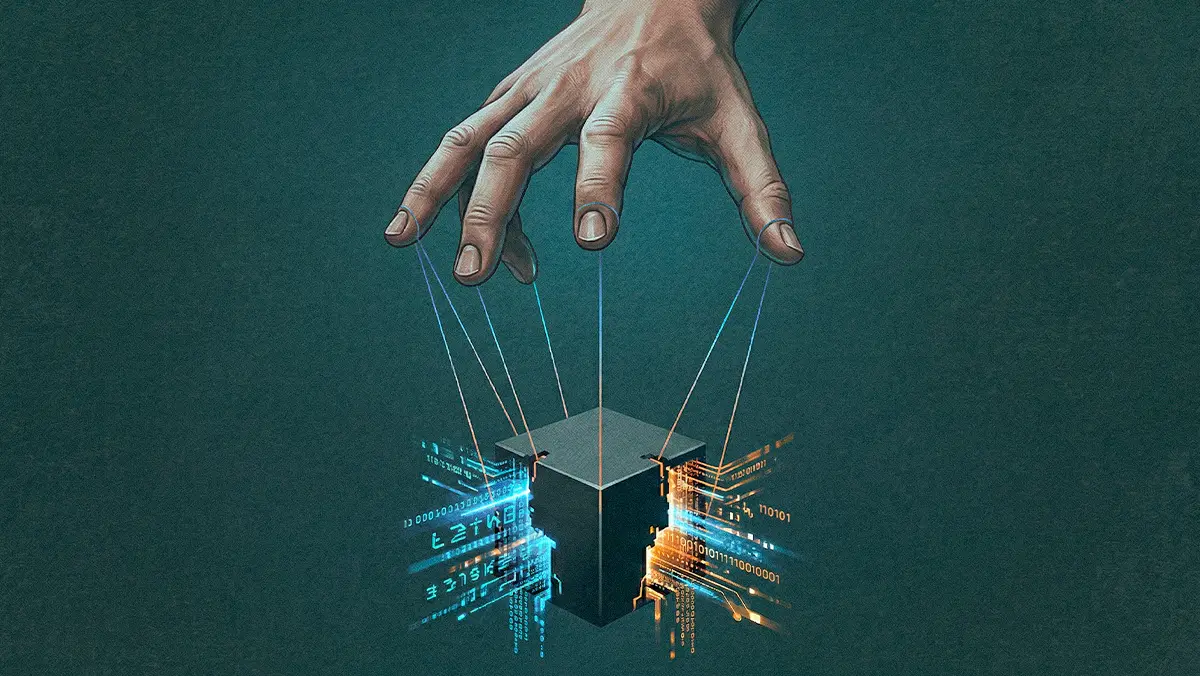

The security checklist: To counter these threats, the agencies urge organizations to track data provenance meticulously, use digital signatures to authenticate changes to datasets, and rely on trusted infrastructure. They also call for continuous risk assessments, since AI threats keep evolving, and recommend defensive measures ranging from quantum-resistant encryption to privacy-preserving techniques such as data masking.
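To make "track provenance and authenticate dataset changes" concrete, here is a minimal sketch in Python's standard library. It is not taken from the advisory. It assumes a dataset is a set of named files, records a SHA-256 hash of each file in a manifest, and tags that manifest so later tampering can be detected. The function names are hypothetical, and HMAC-SHA256 is a stand-in for a true asymmetric digital signature, which a real deployment would use so that verifiers never hold the signing key.

```python
import hashlib
import hmac
import json

def sha256_hex(data: bytes) -> str:
    """Content hash of one dataset file (its provenance fingerprint)."""
    return hashlib.sha256(data).hexdigest()

def build_manifest(files: dict[str, bytes]) -> dict[str, str]:
    """Map each file name to the SHA-256 hash of its contents."""
    return {name: sha256_hex(blob) for name, blob in sorted(files.items())}

def sign_manifest(manifest: dict[str, str], key: bytes) -> str:
    # Stand-in for a digital signature: HMAC-SHA256 over a canonical JSON form.
    # A real pipeline would sign with a private key (e.g. Ed25519) instead.
    payload = json.dumps(manifest, sort_keys=True).encode()
    return hmac.new(key, payload, hashlib.sha256).hexdigest()

def verify_dataset(files: dict[str, bytes], manifest: dict[str, str],
                   tag: str, key: bytes) -> bool:
    """True only if the manifest is authentic and every file still matches it."""
    if not hmac.compare_digest(sign_manifest(manifest, key), tag):
        return False  # the manifest itself was altered or forged
    # Catches modified, added, or removed files.
    return build_manifest(files) == manifest
```

In use, a data owner would call `build_manifest` and `sign_manifest` when a dataset version is approved, then run `verify_dataset` before every training job; any edited, inserted, or deleted file makes verification fail.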

No silver bullets: The advisory details sophisticated attack vectors such as "split-view poisoning," in which bad actors feed tainted information through a compromised but still-trusted web domain. While the guidance calls for screening all ingested data for "malicious and inaccurate material," some analysts, such as those cited by CPO Magazine, note that this is a monumental hurdle for the web-scale datasets that fuel large language models, though more feasible for AI systems with narrower data inputs.
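Split-view poisoning works because a web-scale dataset is often distributed as a list of URLs, and the content behind a URL can change after the dataset was curated. One mitigation discussed in the research literature is to store a content hash with each URL at curation time and discard anything that no longer matches at download time. The sketch below illustrates that idea under stated assumptions: the index format, the `fetch` callback, and the function name are hypothetical, and this check does not replace the content screening the guidance calls for.

```python
import hashlib
from typing import Callable

def filter_by_curation_hash(
    index: list[tuple[str, str]],
    fetch: Callable[[str], bytes],
) -> tuple[list[bytes], list[str]]:
    """Keep downloaded items whose bytes still match the curation-time hash.

    index: (url, expected_sha256_hex) pairs recorded when the dataset was built.
    fetch: hypothetical downloader that returns the current bytes at a URL.
    """
    kept: list[bytes] = []
    rejected: list[str] = []
    for url, expected in index:
        content = fetch(url)
        if hashlib.sha256(content).hexdigest() == expected:
            kept.append(content)
        else:
            # Content changed since curation, e.g. the domain was taken over.
            rejected.append(url)
    return kept, rejected
```

The design choice is deliberately conservative: a mismatch is treated as untrusted even if the change was benign, trading some lost training data for assurance that what the model sees is what the curators reviewed.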

The guidance underscores that as AI becomes deeply embedded in critical operations, from defense to infrastructure, maintaining robust data security is not a one-time fix but an ongoing, adaptive effort.

The push for secure AI data comes amid a broader mood of heightened alert. Experts at the 2025 RSA Conference emphasized that existing laws are already tackling AI-enabled crimes, even as new global regulations, particularly from the EU, aim to manage multiplying data risks from accelerated AI adoption. Meanwhile, U.S. federal and state governments are moving to restrict certain AI technologies affiliated with China, balancing national security with the need to foster domestic innovation.